Meta preparing to deploy four new homegrown chips to handle AI

Meta Platforms Inc. plans to deploy four new generations of its in-house artificial intelligence chips by the end of 2027 as the company turns to custom silicon to help power its rapidly expanding AI workloads.

Meta on Wednesday announced plans for the new chips – MTIA 300, MTIA 400, MTIA 450 and MTIA 500 – as part of an effort to diversify its hardware sources, reduce reliance on outside chipmakers and bring down costs amid a fast-moving and expensive AI race. Meta will continue buying chips from other companies as well, and recently announced deals to spend billions on AI hardware from Nvidia Corp. and Advanced Micro Devices Inc.

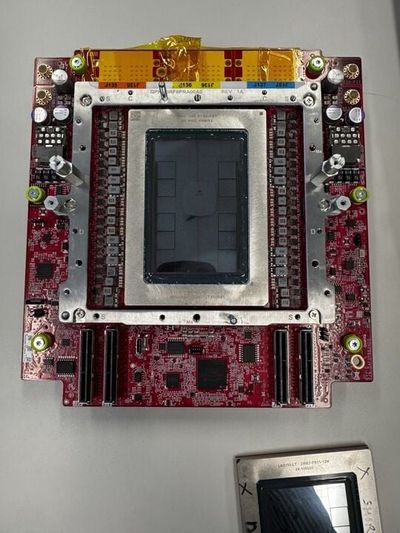

The MTIA 300 is already in production for content ranking and recommendations training, the company said, and MTIA 400, also known as Iris, has completed lab testing and is moving toward deployment. The MTIA 450 and MTIA 500 chips – code-named Arke and Astrid, respectively – are scheduled for mass deployment in 2027.

Yee Jiun Song, Meta’s vice president of engineering, said that the products are being built in parallel, with the MTIA 450 model expected to arrive early in the year and the MTIA 500 slated to follow six months later.

“If we look at overall AI development, I think even in the last two or three months things have accelerated at a pace that has kind of blown everyone’s minds,” Song said. “Silicon programs have to keep up with that evolution of workloads, so we’re constantly looking at our road maps and making sure we’re building what we think will be the most useful products.”

Meta is spending aggressively to build competitive AI models and products, which has led to unprecedented demand for computing power. Meta has turned to Nvidia and AMD to power some of its AI efforts, but has also worked to build out its bench of talent focused on chip design in hopes of developing its own products.

Last year, after Chief Executive Officer Mark Zuckerberg grew impatient with the company’s in-house progress, Meta tried to acquire South Korean chip startup FuriosaAI. After FuriosaAI rejected an $800 million offer, Meta instead acquired Santa Clara, California-based startup Rivos Inc., along with more than 400 of its employees.

The additional headcount has helped Meta’s in-house chips team, known as the Meta Training and Inference Accelerator, pursue several projects at once. MTIA is focused on building more efficient computing architecture for the company’s internal needs, which range from ranking and recommendations systems used to determine what content appears on users’ Instagram feeds to large-scale generative AI inference, where a trained model is used to generate text or images in response to a prompt.

While Meta executives have emphasized the benefits of the company building its own chips, Meta is also one of the biggest buyers of graphics processing units, or GPUs, used for training and running AI models. Its recent agreements with Nvidia and AMD are worth tens of billions of dollars each, and mean Meta has locked in gigawatts of AI capacity over the coming years.

The strategy reflects the company’s dual approach of buying more traditional hardware from industry partners while continuing to invest in custom silicon for tasks that are more specialized to Meta’s platforms.

“We’re not building for the general market, so our chips don’t need to be as general purpose,” Song said. “We can cut out things that we don’t need, which really allows us to drive down cost.”

Still, the economics of chipmaking are challenging. Taking a product from the design phase to manufacturing by a third party – usually Taiwan Semiconductor Manufacturing Co. – can cost billions of dollars and take precious time. Song said it typically takes his team two years to go from design to production. Custom chips usually only pay off at scale and with high use rates.

Last month, the Information reported that Meta had scrapped its most advanced chip focused on training AI models, known by the code name Olympus, after struggling with its design, shifting instead to focus on a less complicated version. A Meta spokesperson declined to comment on the reporting, but said that the company regularly evaluates and evolves its silicon road map and learns from product deployments.

Meta Chief Financial Officer Susan Li said at a conference hosted by Morgan Stanley earlier this month that the company still aims to develop processors that can train AI models.